A Forbes article by Jason Snyder published last week gives a precise name, coordination theatre, to a phenomenon I have been observing for a while, and it overlaps significantly with a previous blog post I wrote on the repeated patterns of technology failure.

Snyder's central argument is that most enterprises are currently measuring AI activity while expecting AI transformation. Dashboards show rising usage, licences are activated, and presentations signal alignment, but underneath all of that, decision rights remain unclear, incentives haven't changed, and workflows are largely intact.

The Forbes piece focuses specifically on internal enterprise dysfunction, an area I'd touched on in my previous post but not examined closely. Reading it, I wanted to look at these failures in broader terms, positioning coordination theatre within a wider set of organisational failures, and to add two practical elements it doesn't cover: a way to spot coordination theatre when you're inside it, and a framework for what to actually do about it.

What the Forbes Piece Is Actually Arguing

Snyder goes further than just describing the surface symptoms. He draws a distinction between assistive AI and decision-grade AI.

Assistive AI: makes individual tasks faster, e.g. drafting emails, summarising documents, speeding up research. Its value is real, but it stays at the surface.

Decision-grade AI: changes how priorities get set, how resources get allocated, how recurring decisions actually get made.

Most enterprise AI, he argues, has stalled at the assistive layer. Usage is rising, but the underlying decision architecture hasn't moved.

He also outlines why this is so hard to see from inside an organisation. From the executive's point of view, AI adoption looks like it's progressing. But the people doing the actual work often experience something closer to improvisation. The gap between those two realities is where coordination theatre lives.

One more dimension he raises that doesn't get enough attention is shadow AI. Employees are using AI outside official channels (personal accounts, browser tools, informal automations) because those tools are simply better for their actual workflows.

Snyder reads this not as a compliance problem but as a structural signal that the official deployment doesn't match how work actually gets done.

The most precise line in his piece is this: "AI is a mirror inside the enterprise. It does not create dysfunction; it reveals it." AI didn't break these organisations. It made visible what was already broken.

The Disconnect: Two Different AI Conversations

This is something the Forbes piece doesn't directly address, but it's a paradox I encounter constantly in my own work. As an AI consultant I sit at the intersection of two very different conversations.

On one side, I'm regularly talking to business leaders who are struggling with the question of how to use AI effectively in their organisations. They're reading the research, hearing about high failure rates, and wondering whether the investment is worth it. On the other side, I'm using these tools hands-on every day and I know from direct experience how genuinely powerful they are and the real difference they can make.

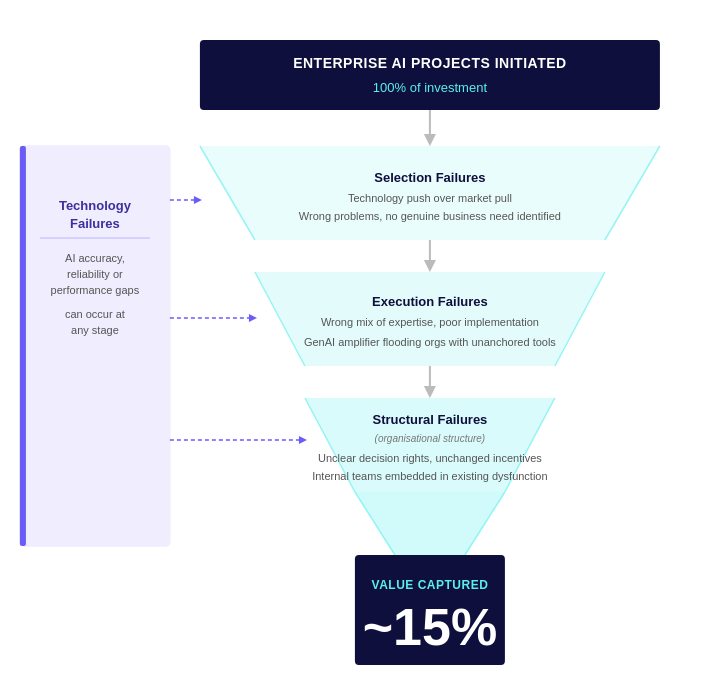

When I trace the reasons behind those high failure rates, what I consistently find is that they're rarely down to the AI models themselves. The underlying causes are generally more complex than that, differ significantly from business to business, and are almost always rooted in something organisational rather than something technical. That observation is what prompted me to think about a broader framework for understanding where value actually gets lost.

The data reflects this paradox clearly: Section's 2026 AI Proficiency research suggests that only about 15% of enterprise AI use cases are likely to generate measurable ROI. Meanwhile, in practitioner communities, people doing hands-on work with these same tools are describing genuine, sometimes dramatic productivity gains.

Both groups are right. They're not contradicting each other. Individual and small-team AI is working. People who have rebuilt their personal workflows around these tools are seeing real returns. The failure is not happening at the tool level. It's happening at the organisational level, in the gap between individual capability and collective value capture.

The funnel below should help to illustrate this clearly.

Technology Failures: cases where AI genuinely doesn't perform accurately or reliably enough. This is a real category, and it would be wrong to dismiss it.

Organisational Failures: the majority of value loss happens through organisational failure modes that have nothing to do with whether the technology works.

These two categories are what get conflated in boardroom discussions: the conclusion is that AI is coming up short, when the limiting factor is often the organisational structures around it rather than the technology itself.

This is also why throwing better technology at the problem doesn't move the success rate. You can give an organisation a more capable model or a broader licence, and if the underlying organisational failures are present, the number stays at 15%.

The Wider Context: Why Organisational Failures Are So Persistent

These failure modes aren't random and they aren't new. The types of organisational failure shown in the funnel (Selection, Execution and Structural Failures) have accompanied every major technology cycle, and AI is no different.

What makes the current wave more acute is that GenAI's low barrier to entry means anyone can build an impressive prototype in an afternoon, which floods organisations with technically functional tools that were never anchored to genuine business need. The result is more activity, more noise, and more material for these failure modes to form around. I explored the underlying dynamics in more detail in my previous post; the point here is simply that these failures are predictable, recognisable, and in many cases avoidable.

How to Spot Coordination Theatre

Spotting coordination theatre is the first step to dismantling it. Here are five signals worth examining:

You're tracking activity, not outcomes: Licences activated, prompts submitted, and weekly logins are coordination theatre metrics. They tell you people are using tools, but nothing about whether the business has improved.

Nobody owns the outcome: There is a person responsible for the AI rollout, but there may not be a person accountable for the business result it was supposed to produce.

Incentives are unchanged: People are being asked to adopt new tools while still being measured and rewarded on the outputs of old workflows.

Shadow adoption is spreading: People are using AI outside official channels. The problem isn't the people going around the system — it's what the system is failing to provide.

Roles look the same six months in: If the org chart and the job descriptions are unchanged, the workflows haven't changed either, regardless of what the usage dashboard reports.

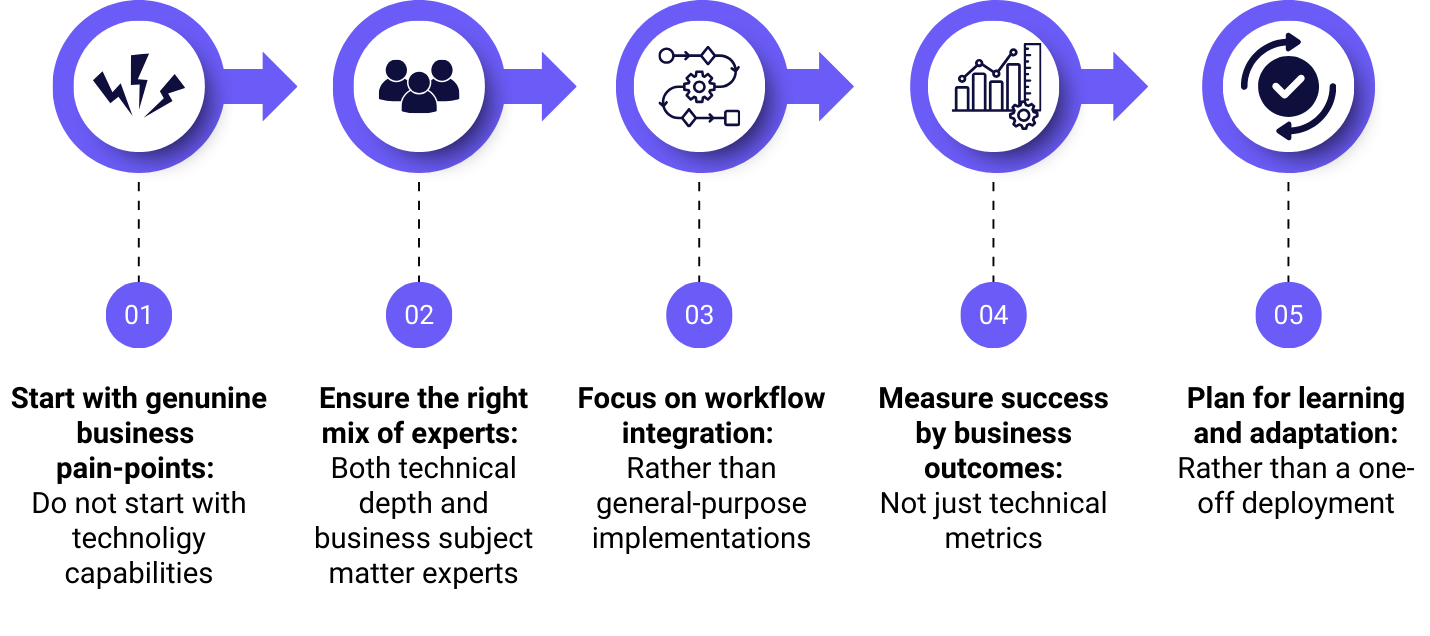

A Framework for Doing This Better

The 15% success rate isn't a fixed feature of enterprise AI. Whether you are trying to escape coordination theatre, avoid a selection failure, or simply build something that generates genuine business value, the same core practices apply.

Final Thoughts

The data is consistent enough at this point that the dysfunction is hard to dismiss. Individuals and small teams are genuinely benefiting from AI, but that value is failing to accumulate at the organisational level for the vast majority of enterprises. Coordination theatre is a significant part of why, but as the funnel illustrates, it sits within a broader set of organisational failure modes, any one of which can stop value from flowing through.

The good news is that the pitfalls are identifiable. Knowing what to look for — whether that's misaligned incentives, activity metrics masquerading as outcomes, or shadow adoption signalling a deeper mismatch — puts you in a much better position than most organisations currently are.

If any of this resonates with what you're seeing in your own organisation, or if you'd like to talk through where you sit relative to these patterns, feel free to get in touch.